Crowd Workers Are an Integral Piece of the Ethical AI Puzzle – Part 4

Building ethical AI isn’t a one-and-done checkbox-marking exercise – it’s a continual process made up of many nuanced considerations and decisions. It’s therefore our responsibility not only to build AI models that are robust to bias, but also to train and maintain them with unbiased, representative data to begin with. That’s why we must be mindful of our data collection practices and, in particular, of the role crowdsourcing platforms play in collecting that data.

In the conclusion to our series on crowd work and its place in the ethical AI life cycle, we examine the current lack of legal protections for crowd workers, the role of privacy on the platforms, and how we must navigate these sensitive issues to be on the right side of both future government policy and workers’ preferences.

The rights of crowd workers: autonomy and privacy

Another criticism is the precarity of crowd work. This is exacerbated by its novelty, which has left gig work vaguely defined and thinly regulated. While it’s true that crowd workers currently lack the labor protections of part- or full-time workers, platform work is attractive precisely because of its autonomy and its flexibility of schedule, workload, and commitment, things that are much harder to negotiate at will in regular part- and full-time employment. Protecting crowd workers and their rights remains paramount, but it would be prudent to explore ways of doing so without sacrificing the autonomy and flexibility that draw them to crowd work in the first place.

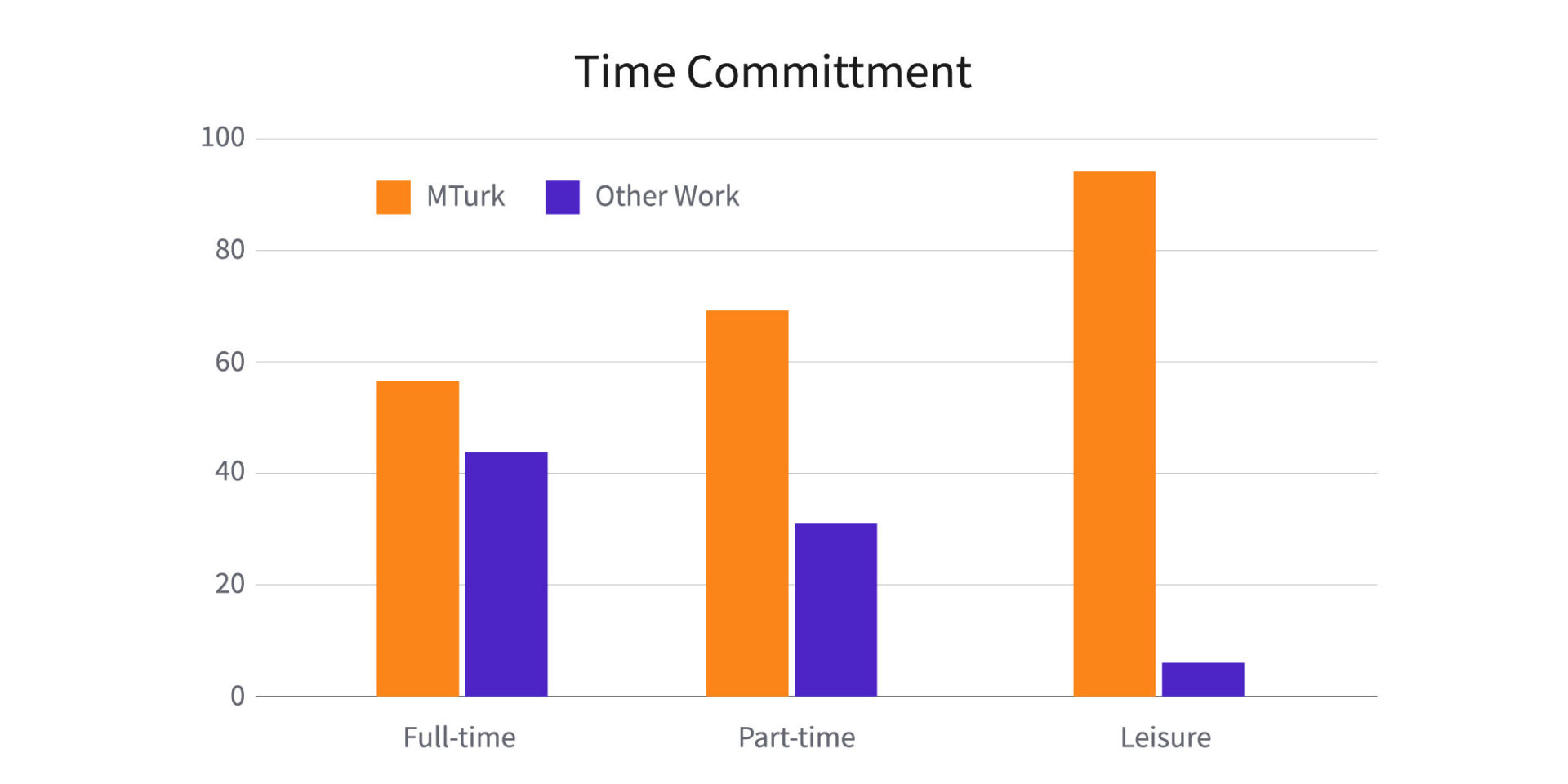

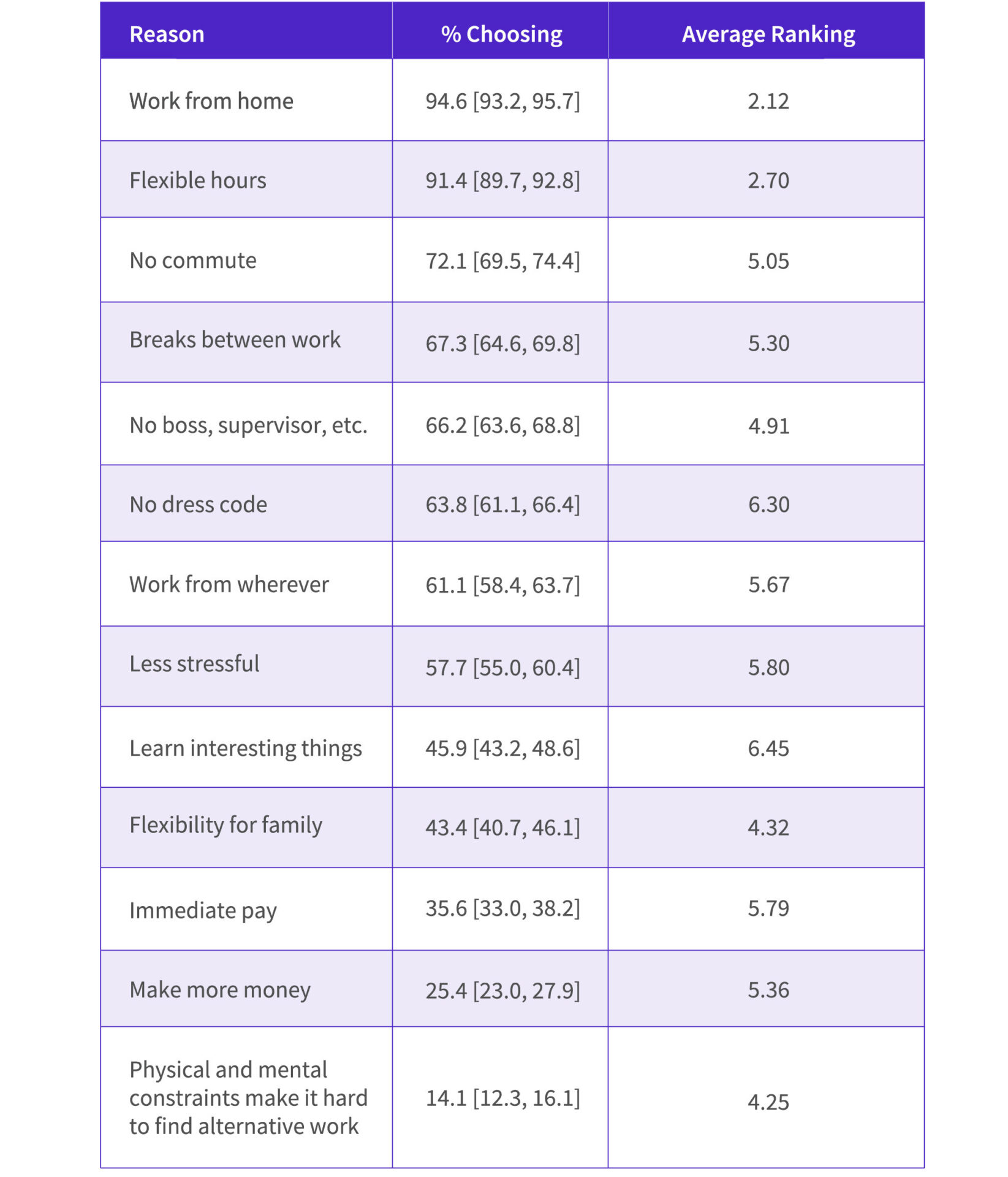

Illustrating this is Moss et al.’s “Is it Ethical to Use Mechanical Turk for Behavioral Research?”, which surveys over 4000 crowd workers on Amazon’s Mechanical Turk platform. The survey found that MTurk workers (and, by extension, crowd workers in general) are a self-selecting population that prefers MTurk for its benefits over traditional work, even when both are paid at the same rate. One telling table from the paper shows as much, breaking the preference down further by full-time, part-time, and leisure-time work.

The chief reasons cited were MTurk’s flexible hours, the ability to work from home, and the lack of a commute. Other reasons were notable too: 14 percent of surveyed workers said MTurk allowed them to work at all because of “physical and mental constraints [making] it hard for me to find an alternative work,” and 43 percent chose it for the flexibility it gives their family lives. Both are understandable and familiar reasons given the current push by many to transition to remote work in the post-pandemic era.

For its part, as Defined.ai pursues the most diverse and representative data, Neevo actively courts and employs crowd workers with disabilities to ensure that our collections contain their contributions. After all, how else would one make AI accessible if not by making these populations seen and represented in training and evaluation data?

While there is a population of workers who rely on crowd work as their primary source of income, and who are thus most vulnerable to disruptions in work and nebulous legal protections, the freedom of crowd work is, among other benefits, a powerful draw regardless.

A perhaps less discussed point of contention is privacy. The debate over privacy between consumers and online businesses has slowly but steadily settled in consumers’ favor, particularly with legislation such as the GDPR. Between employees and employers, however, the debate continues: at what point does data collection on and from employees become a violation, and what responsibilities do employers have to maintain their employees’ privacy? Further clouding the issue, what little attention this space has received has been secondary to pay, protections, status, and open lines of communication.

Getting ahead of the debate, however, Defined.ai has adopted the privacy protections of the GDPR and the protocols required for ISO certification, ensuring that any identifying biographical data of our crowd workers are fully protected, while also anonymizing and securing the data handled and reviewed between workers and requesters. The information flow between requesters and workers is thus limited to demographic distributions for dataset metadata and whatever information is strictly necessary for task completion. Because privacy can conflict with demands for more open lines of communication, Neevo staff serve as an integral interface for crowd workers, relaying and clarifying information between requesters and the crowd while still respecting GDPR regulations, ISO processes, and the demands of requester NDAs.

The race has only just begun

While Defined.ai has done much in its mission to make crowd work an equitable and fair occupation, we acknowledge that there’s still a long way to go, and we’re relentlessly working on improvement. Workers’ rights and their legal standing remain open questions as we wait to see what policymakers decide. However, with significant inroads in worker pay, training, and privacy, we’ve earned ourselves a huge head start, and we hope that whatever the future brings, it continues to allow workers the autonomy and freedom they value in crowd work.

To reiterate, ethical AI isn’t a checkbox-marking activity; it’s a process and a choice. Defined.ai believes in a future built on ethical AI, and so it’s our choice to invest in that future and in those who make it possible. We’ve already done so much and plan to do much more as practice and policy evolve, hopefully making crowd work something we would one day want our children to participate in.

Join us at the head of the pack in committing to and fighting for that future by ensuring your data is ethically collected.

References:

Kittur, A., Nickerson, J. V., Bernstein, M. S., Gerber, E. M., Shaw, A., Zimmerman, J., Lease, M., & Horton, J. J. (2013, February 23). The Future of Crowd Work. https://hci.stanford.edu/publications/2013/CrowdWork/futureofcrowdwork-cscw2013.pdf

Moss, A. J., Rosenzweig, C., Robinson, J., Jaffe, S. N., & Litman, L. (2020, April 28). Is it Ethical to Use Mechanical Turk for Behavioral Research? Relevant Data from a Representative Survey of MTurk Participants and Wages. https://doi.org/10.31234/osf.io/jbc9d